[1] T. Afouras, J. S. Chung, A. Senior, O. Vinyals and A. Zisserman, "Deep audio-visual speech recognition," IEEE Transactions on Pattern Analysis and Machine Intelligence, Vol. 44, No. 12, pp. 8717–8727, 2022.

[2] S. Petridis and M. Pantic, "Deep complementary bottleneck features for visual speech recognition," ICASSP, pp. 2304–2308, 2016.

[3] M. Wand, J. Koutník and J. Schmidhuber, "Lipreading with long short-term memory," ICASSP, pp. 6115–6119, 2016.

[4] S. O. Kim and K. H. Lee, "Design & implementation of speechreading system using the face feature on the Korean 8 vowels," Korea Society of Computer and Information Winter Conference, pp. 135–140, 2008.

[5] M. A. Lee, "A lip-reading algorithm using optical flow and properties of articulatory phonation," Journal of Korea Multimedia Society, Vol. 21, No. 7, pp. 745–754, 2018.

[6] J. Deng, J. Guo, Y. Zhou, J. Yu, I. Kotsia and S. Zafeiriou, "RetinaFace: Single-shot multi-level dense localisation in the wild," CVPR, pp. 5203–5212, 2020.

[7] A. Graves, S. Fernández, F. Gomez and J. Schmidhuber, "Connectionist temporal classification: Labeling unsegmented sequence data with recurrent neural networks," ICML, pp. 369–376, 2006.

[8] T. Stafylakis and G. Tzimiropoulos, "Combining residual networks with LSTMs for lipreading," Interspeech, pp. 3652–3656, 2017.

[9] K. He, X. Zhang, S. Ren and J. Sun, "Deep residual learning for image recognition," CVPR, pp. 770–778, 2016.

[10] J. S. Chung and A. Zisserman, "Lip reading in the wild," ACCV, pp. 87–103, 2016.

[11] J. S. Chung, A. Senior, O. Vinyals and A. Zisserman, "Lip reading sentences in the wild," CVPR, 2017.

[12] J. S. Chung and A. Zisserman, "Lip reading in profile," BMVC, pp. 155.1–155.11, 2017.

[13] T. Afouras, J. S. Chung and A. Zisserman, "LRS3-TED: a large-scale dataset for visual speech recognition," arXiv preprint arXiv:1809.00496, 2018.

[14] W. Liu, D. Anguelov, D. Erhan, C. Szegedy, S. Reed, C. Y. Fu and A. C. Berg, "SSD: Single shot multibox detector," ECCV, pp. 21–37, 2016.

[15] J. Yuan and M. Liberman, "Speaker identification on the SCOTUS corpus," Journal of the Acoustical Society of America, Vol. 123, No. 5, pp. 3878–3882, 2008.

[16] J. S. Chung and A. Zisserman, "Out of time: automated lip sync in the wild," Workshop on Multi-view Lip-reading, ACCV, pp. 251–263, 2016.

[17] T. Stafylakis and G. Tzimiropoulos, "Combining residual networks with LSTMs for lipreading," Interspeech, pp. 3652–3656, 2017.

[18] Y. M. Assael, B. Shillingford, S. Whiteson and N. de Freitas, "LipNet: Sentence-level lipreading," arXiv preprint arXiv:1611.01559, 2016.

[19] B. Shillingford, Y. Assael, M. W. Hoffman, T. Paine, C. Hughes, U. Prabhu, H. Liao, H. Sak, K. Rao, L. Bennett, M. Mulville, B. Coppin, B. Laurie, A. Senior and N. de Freitas, "Large-scale visual speech recognition," Interspeech, pp. 4134–4139, 2019.

[20] X. Zhang, F. Cheng and S. Wang, "Spatio-temporal fusion based convolutional sequence learning for lip reading," ICCV, pp. 713–722, 2019.

[21] A. Gulati, J. Qin, C. C. Chiu, N. Parmar, Y. Zhang, J. Yu, W. Han, S. Wang, Z. Zhang, Y. Wu and R. Pang, "Conformer: Convolution-augmented transformer for speech recognition," Interspeech, pp. 5036–5040, 2020.

[22] P. Ma, S. Petridis and M. Pantic, "End-to-end audio-visual speech recognition with conformers," ICASSP, pp. 7613–7617, 2021.

[23] H. Dinkel, S. Wang, X. Xu, M. Wu and K. Yu, "Voice activity detection in the wild: a data-driven approach using teacher-student training," IEEE/ACM Trans. on Audio, Speech and Language Processing, Vol. 29, pp. 1542–1555, 2021.

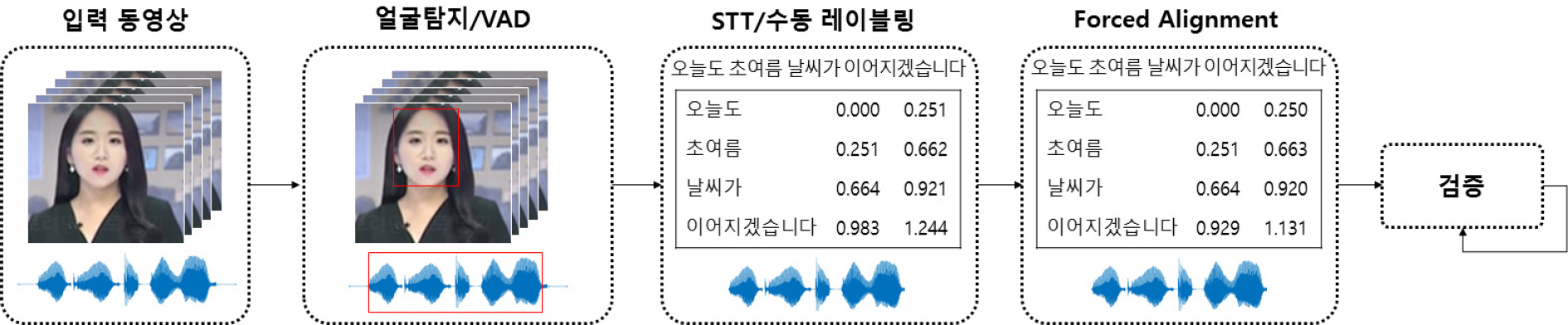

[24] M. McAuliffe, M. Socolof, S. Mihuc, M. Wagner and M. Sonderegger, "Montreal Forced Aligner: Trainable text-speech alignment using Kaldi," Interspeech, pp. 498–502, 2017.

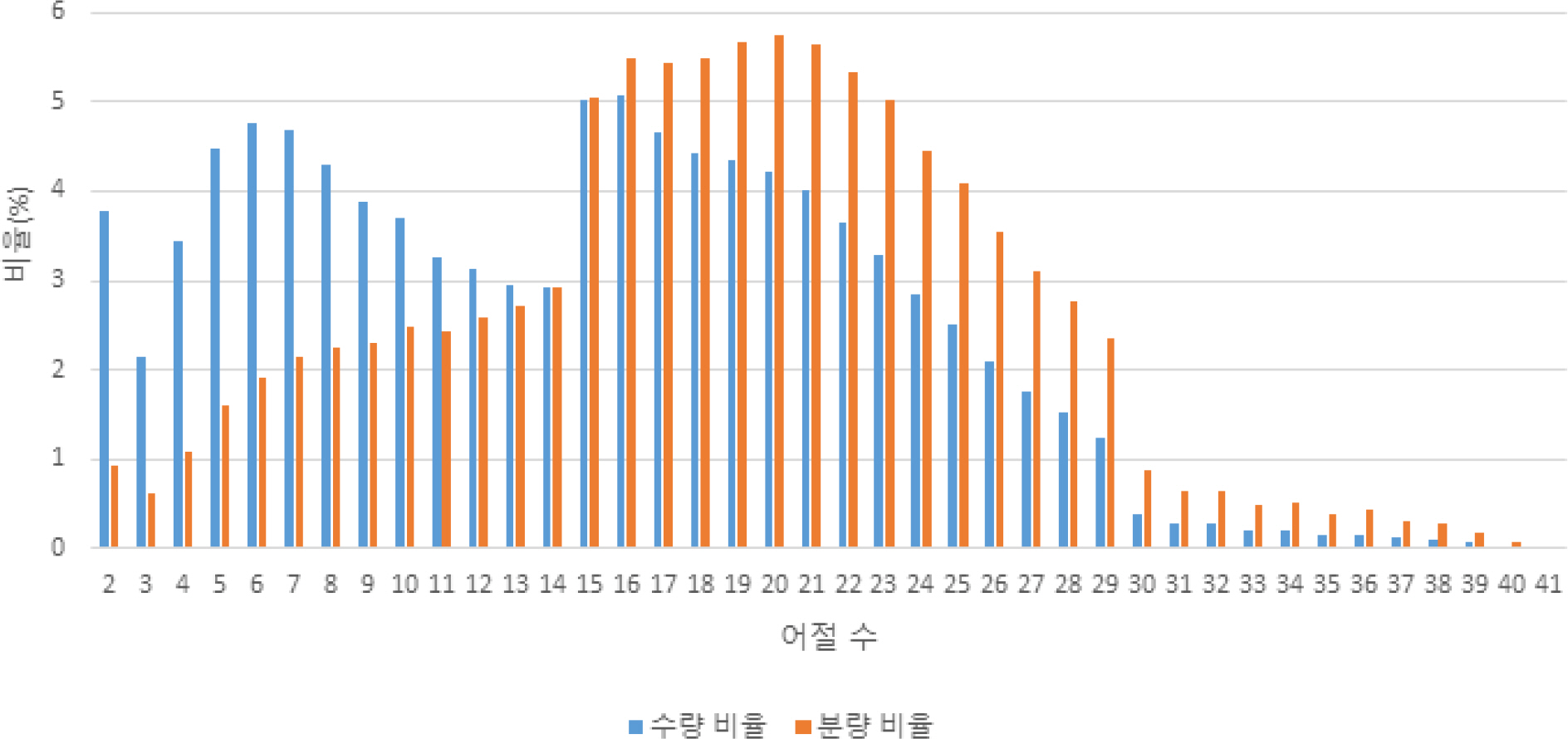

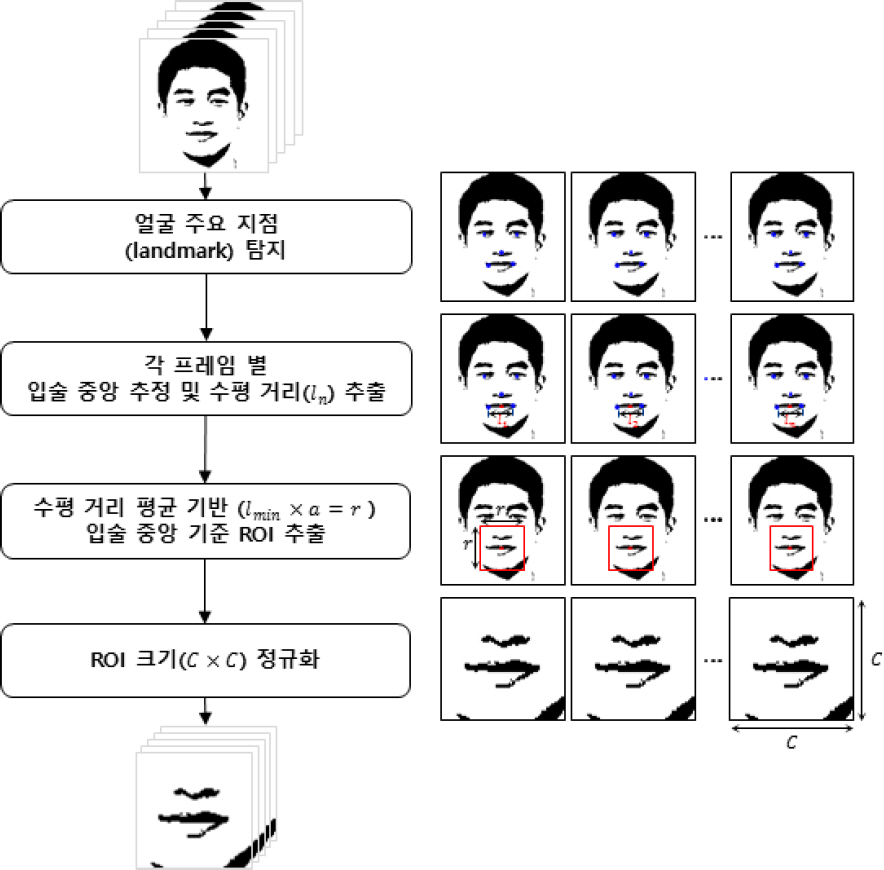

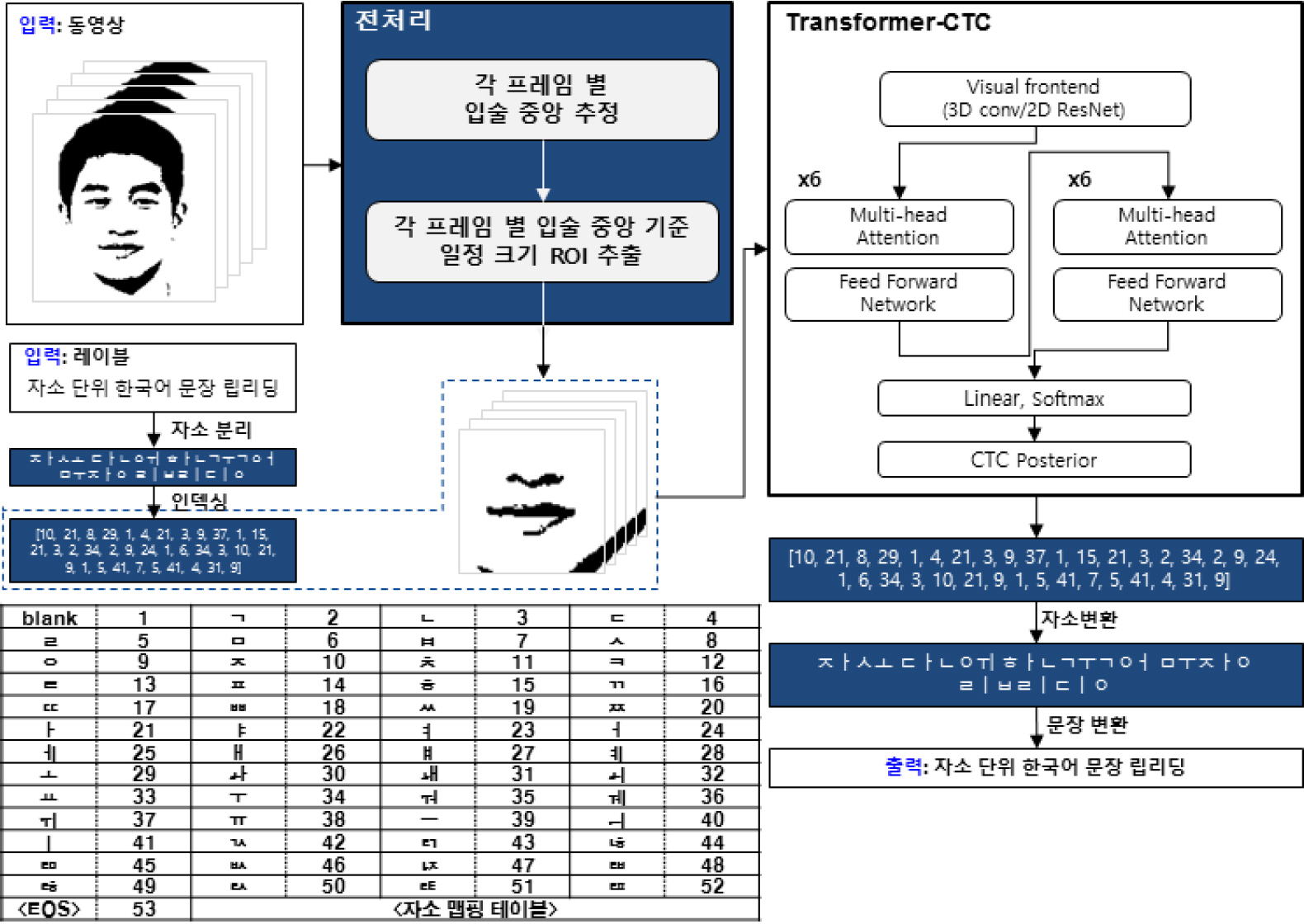

[25] S. S. Yoon, T. Y. Chun, D. J. Jung and H. S. Song, "A study on data preprocessing for lip-reading of national defense data," KIMST Annual Conference, pp. 367–368, 2022.